Smaller Bites, Bigger Meals: What We Learned Running Opus 4.7 in Offensive Workflows

We got exclusive early access to Anthropic's latest model, Opus 4.7. Here's what's new, what's improved, and why it matters for the future of AI security.


Today, Anthropic released Opus 4.7. While the world was holding its breath, we were lucky enough to have early access, and we spent that time evaluating how the model actually behaves in real offensive workflows.

We learnt it’s a great model, but it’s different from its predecessor, and you need to approach it a bit differently to reap the full rewards from Anthropic’s progress.

How We Leverage LLMs at XBOW

For our team to deliver expert-level pentesting autonomously to our customers, we need to build something that harnesses AI's creativity while meeting the accuracy and verifiability that cybersecurity demands.

AI is particularly strong at breadth — rapidly exploring attack surfaces, chaining ideas, and adapting strategies in real time. Where it struggles is depth and consistency: knowing when to go deeper, when to pivot, and how to avoid wasting effort. XBOW is designed to bridge that gap, combining model intelligence with structure, memory, and execution discipline.

One important detail: we’re not tied to any single model. XBOW is model-agnostic by design. We continuously evaluate and swap models to take advantage of their respective strengths.

To do that rigorously, we’ve built an internal benchmarking system: deployments of open-source projects in which we previously found a real vulnerability, each frozen at its vulnerable version. We run every model we’ve ever considered against the same set of applications. This gives us a consistent way to measure performance, not just on isolated completions, but across the whole iterative process of exploit crafting.

We ran that process with Opus 4.7. Here’s what stood out.

Opus 4.7: Smaller Bites, Bigger Meals

At first glance, Opus 4.7 looked weak.

We let it loose on our example applications and compared how many vulnerabilities each model had found after a fixed number of completions. For Opus 4.7, that number was noticeably lower than for previous models. That’s usually a bad sign, and it's surprising for a new model version – they're not always better, but they're rarely worse.

So we looked closer, and we noticed that Opus 4.7 took quite a different approach. While 4.6 was writing long, ambitious scripts that tried out several items at once, 4.7 took more atomic, targeted actions.

That meant that with a cutoff after a fixed number of completions, we were comparing apples to oranges: if we had instead used a fixed token budget, or a fixed limit on execution time, Opus 4.7 would have produced more actions than Opus 4.6.

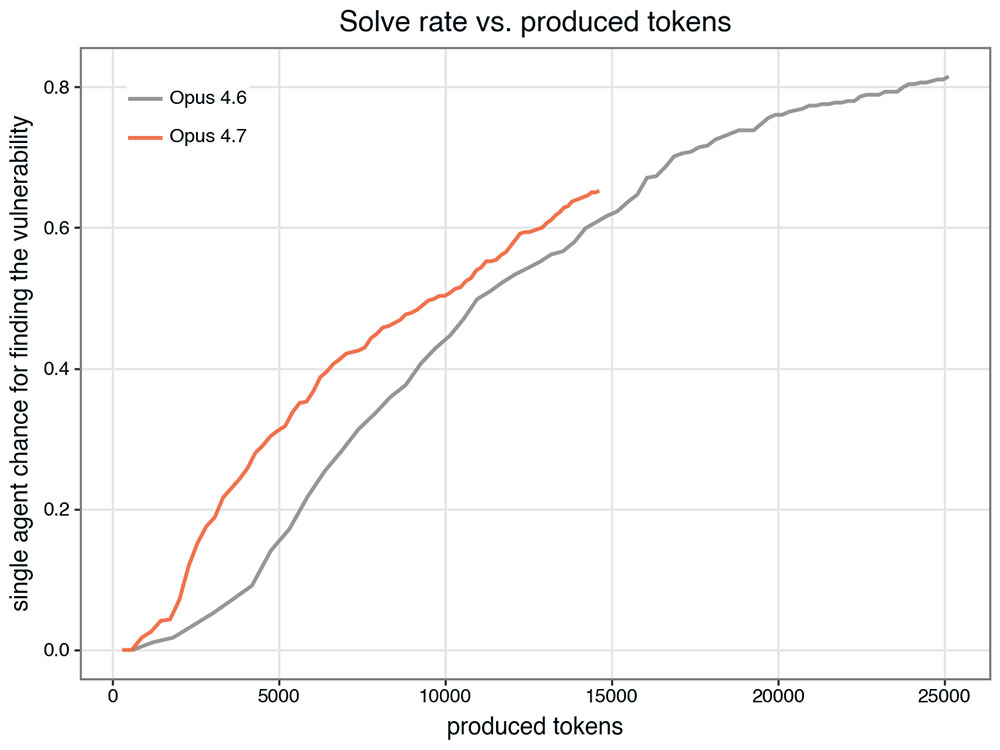

So we reframed the comparison: instead of measuring progress by iterations, we normalized by total tokens consumed – and the picture flipped (Figure 1).

Given the same token budget, Opus 4.7 gets further. In other words, it’s not less capable, it’s more efficient. It takes smaller steps, but those steps are more deliberate.
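To make the difference between the two cutoffs concrete, here is a minimal Python sketch. The `Step` record, both helper functions, and the two traces are invented for illustration; they are not our harness and not our benchmark data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    """One model completion in a run (hypothetical record shape)."""
    tokens: int        # tokens consumed by this completion
    found_vuln: bool   # whether this step confirmed a vulnerability


def found_within_completions(steps: List[Step], max_completions: int) -> int:
    """Count findings when the run is cut off after a fixed number of completions."""
    return sum(step.found_vuln for step in steps[:max_completions])


def found_within_tokens(steps: List[Step], token_budget: int) -> int:
    """Count findings when the run is cut off once the token budget is exhausted."""
    used = 0
    found = 0
    for step in steps:
        if used + step.tokens > token_budget:
            break
        used += step.tokens
        found += step.found_vuln
    return found


# Made-up traces: a "big bites" model and a "small bites" model.
big_bites = [Step(4000, False), Step(4000, True), Step(4000, False), Step(4000, True)]
small_bites = [
    Step(1000, False), Step(1000, False), Step(1000, True), Step(1000, False),
    Step(1000, False), Step(1000, True), Step(1000, False), Step(1000, True),
]

if __name__ == "__main__":
    # Cut off after 4 completions: the big-bites trace looks better.
    print(found_within_completions(big_bites, 4), found_within_completions(small_bites, 4))
    # Cut off after 8000 tokens: the ranking flips.
    print(found_within_tokens(big_bites, 8000), found_within_tokens(small_bites, 8000))
```

With these toy numbers the same two traces rank in opposite order depending on which cutoff is applied, which is the effect described above.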

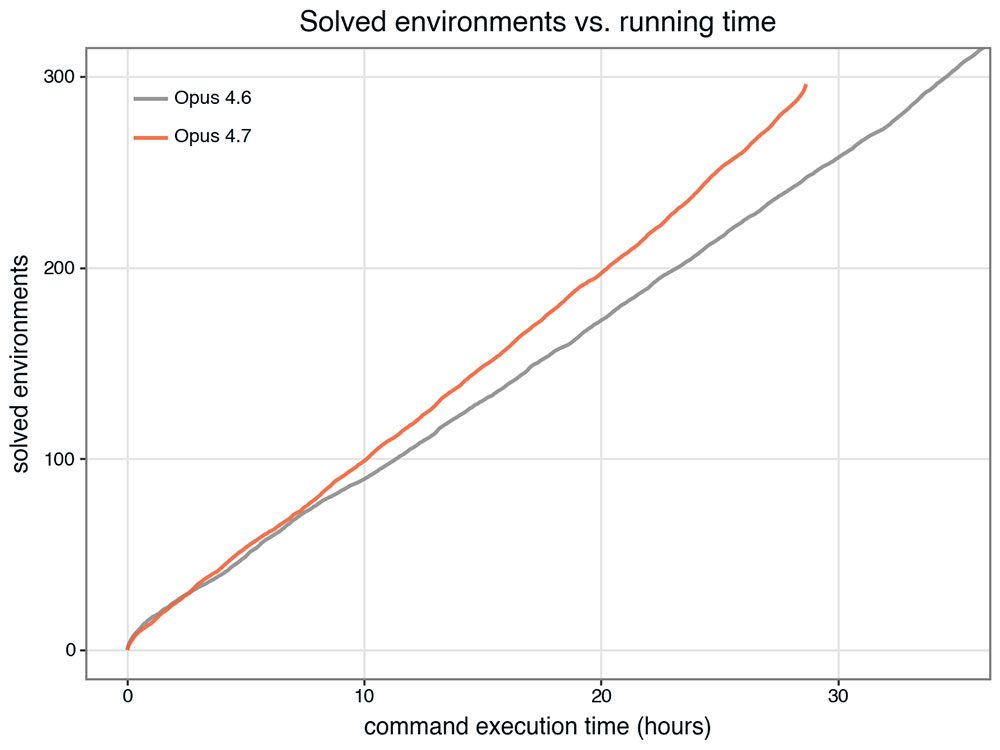

This is also reflected in wall-clock time (Figure 2). Since we were testing with a pre-production version of Opus 4.7, we can’t cleanly compare model query times (though we expect that, because 4.7 produces fewer tokens, its query times will be shorter). But we did measure how long it took to _execute_ the actions the model selected. If Opus 4.6 produces a large script while Opus 4.7 breaks the same work into smaller components, each individual action will execute more quickly.
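Timing only the execution of actions, and leaving model query time out, could look roughly like the sketch below. The `time_actions` helper and the stand-in actions are hypothetical; a real harness would run the commands or scripts the model selected.

```python
import time
from typing import Callable, List


def time_actions(actions: List[Callable[[], object]]) -> List[float]:
    """Run each action and return its execution time in seconds.

    Only the action itself is timed; the model query that produced it is not.
    """
    durations = []
    for action in actions:
        start = time.perf_counter()
        action()
        durations.append(time.perf_counter() - start)
    return durations


if __name__ == "__main__":
    # Stand-in actions; these are placeholders, not real pentest steps.
    durations = time_actions([lambda: sum(range(100_000)), lambda: sorted(range(1000))])
    print(f"actions: {len(durations)}, total: {sum(durations):.4f}s, "
          f"mean per action: {sum(durations) / len(durations):.4f}s")
```

Reporting both the total and the per-action mean keeps the two effects apart: many small actions can have a low mean while the total depends on how many of them are needed.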

Adjusting the Strategy

Once you see that, the implication is clear: you need to drive the model differently. If a model is naturally cautious and you want it to take bigger swings, you have to explicitly encourage it to be bolder. Opus 4.7 supports an `effort` parameter, which gives some programmatic control over this behaviour – but even at its highest setting, 4.7’s “bite size” still lagged far behind other models’ on our benchmarks.

So we’ve started experimenting with our prompting strategy to push for more ambitious actions per step. For example, we’ve just kicked off another assessment where we simply changed a prompt from “What instruction should we execute next?” to “What instruction should we execute next? Please be aware that we only have a limited number of iterations to solve the challenge. If you can think of an ambitious instruction that makes more progress in one go, that is often preferable.”

The goal is simple: cover more ground per step without losing the efficiency gains. It’s too early to know how the experiment will go, but we’ll continue to adjust.
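One simple way to run such an experiment is to keep the nudge behind a toggle, so the same harness can produce both prompt variants. The sketch below uses the two prompt texts quoted above; the function name and the flag are hypothetical, not our actual code.

```python
BASE_PROMPT = "What instruction should we execute next?"

# Extra sentences asking for bigger steps, as quoted in the text above.
AMBITION_NUDGE = (
    "Please be aware that we only have a limited number of iterations to solve "
    "the challenge. If you can think of an ambitious instruction that makes more "
    "progress in one go, that is often preferable."
)


def build_step_prompt(encourage_ambition: bool) -> str:
    """Return the per-step prompt, optionally with the ambition nudge appended."""
    if encourage_ambition:
        return f"{BASE_PROMPT} {AMBITION_NUDGE}"
    return BASE_PROMPT


if __name__ == "__main__":
    print(build_step_prompt(encourage_ambition=False))
    print(build_step_prompt(encourage_ambition=True))
```

Keeping the baseline prompt reachable through the same function makes it straightforward to compare runs with and without the nudge on identical targets.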

What Comes Next

Early results suggest that Opus 4.7 is a highly capable model, just one that optimizes for efficiency in a way that isn’t immediately obvious if you’re measuring the wrong thing.

I expect many teams will ultimately upgrade from Opus 4.6 to Opus 4.7 after making a few small adjustments. For some of XBOW’s use cases, the argument for upgrading is already clear:

For example, we noticed that even without prompt changes, Opus 4.7 outperforms its predecessors on website authentication – a smaller-scale task that doesn’t need elaborate schemes, but rather precise actions. Because that task is centered on UI interactions, it is especially sensitive to what used to be a specific weakness of Opus 4.6: accurately driving browser interactions that require precise clicks.

In our visual acuity benchmark, we present the model with 200 cases taken from real authentication workflows where it should be obvious which one of several small buttons needs clicking – but actually doing it needs precisely entered coordinates for our headless browser interface. Recent Opus models deteriorated in performance on that benchmark, with Opus 4.6 dropping to just 54.5% perfect-click accuracy. That doesn’t make logging in impossible (it can try for a while, after all, and there are always alternative ways of driving the browser), but it makes it slower, which is why we don’t use 4.6 for authentication. But Opus 4.7 blew its predecessors out of the water by achieving 98.5% accuracy on the same visual acuity benchmark.
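As a rough illustration of how such a score could be computed, the sketch below counts a case as a perfect click when the chosen coordinates land inside the target button's bounding box. That scoring rule, the `Box` and `ClickCase` types, and the sample cases are assumptions made for this example; only the 200-case count and the two percentages come from the benchmark itself.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box of a button, in page pixels."""
    left: float
    top: float
    width: float
    height: float

    def contains(self, x: float, y: float) -> bool:
        return (self.left <= x < self.left + self.width
                and self.top <= y < self.top + self.height)


@dataclass(frozen=True)
class ClickCase:
    """One benchmark case: the correct button and where the model clicked."""
    target: Box
    click_x: float
    click_y: float


def perfect_click_accuracy(cases: List[ClickCase]) -> float:
    """Fraction of cases whose click landed inside the target box."""
    hits = sum(c.target.contains(c.click_x, c.click_y) for c in cases)
    return hits / len(cases)


def cases_hit(rate: float, total: int) -> int:
    """Convert an accuracy rate back into a whole number of cases."""
    return round(rate * total)


if __name__ == "__main__":
    button = Box(left=100, top=50, width=24, height=24)
    sample = [
        ClickCase(button, 110, 60),   # inside the button
        ClickCase(button, 101, 51),   # inside, near the corner
        ClickCase(button, 123, 73),   # inside, near the far edge
        ClickCase(button, 130, 60),   # just to the right of the button: a miss
    ]
    print(perfect_click_accuracy(sample))
    print(cases_hit(0.545, 200), cases_hit(0.985, 200))
```

If each of the 200 cases is scored once, 54.5% corresponds to 109 correct clicks and 98.5% to 197.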

That lines up with the benchmarks Anthropic has published. Opus 4.7 may behave differently, and may need slightly different prompting, but if used well, it’s extremely capable.

It may not be the loudest model we’ve ever tested, but it’s certainly one of the sharpest.

Learn more about how we leverage the strengths and accommodate the weaknesses of AI models in my recent blog post.
