Key Considerations for AI Pentesting

AI pentesting helps scale offensive security by automating discovery, exploitation, and validation, with solutions ranging from assisted tools to fully autonomous agents.

If you’re looking to augment and accelerate your offensive security with an AI-based pentesting solution, here are some key things to consider and questions to ask.

Why you need to move beyond traditional manual penetration testing

Manual penetration testing remains the most accurate and in-depth security assessment method. Nothing compares to the results generated by a skilled human pentester probing a system with the mindset of an attacker. The problem is scaling that testing and its results, especially now that AI is powering both developers and attackers.

AI-led pentesting has emerged to fill this gap.

Flavors of AI pentesting

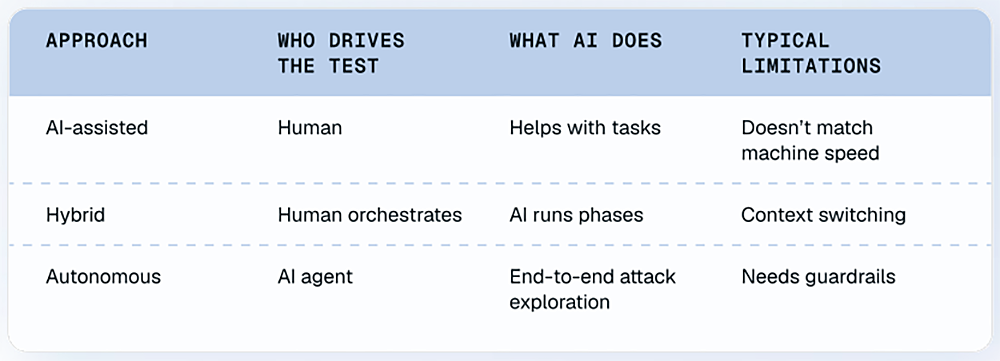

Most AI pentesting solutions today fall roughly into three categories: AI-assisted pentesting, hybrid/AI-augmented pentesting, and AI-led autonomous pentesting.

AI-assisted pentesting

With AI-assisted pentesting, humans drive the testing. AI helps with:

- Vulnerability discovery

- Payload generation

- Log parsing

- Report drafting

AI tools accelerate specific steps of the pentesting process, such as scanning for and alerting on known vulnerabilities, but a human stays heavily involved, planning the overall testing strategy and managing each individual step.

Hybrid/AI-augmented pentesting

In hybrid/AI-augmented pentesting, AI gets more autonomy, but a human still leads and orchestrates the test. AI handles discrete stages on its own, such as:

- Discovery automation

- Attack path analysis

- Vulnerability prioritization

Humans interject after each phase, validating findings and hypotheses before testing continues.

AI-led autonomous pentesting

With this method, AI takes the lead, and humans play more of an oversight role.

AI agents:

- Map attack surface

- Form hypotheses

- Execute multi-step exploit chains

- Validate findings

- Draft reports

Humans:

- Set scope

- Review results

- Handle edge-case logic abuse

- Investigate more challenging, creative exploits

What to look for in an AI pentesting solution

If you are evaluating AI-based pentesting solutions, here is a list of items to consider.

Validation and accuracy

Noisy, inaccurate results already plague security teams. Before you add to the backlog unnecessarily, ask the following about any AI pentesting solution you are considering:

- Does it prove exploitation, or just theorize?

- Can it unearth net-new vulnerabilities? Could it find a zero-day, or is it just looking for existing patterns?

- Does it reduce false positives? What’s the false-positive rate, and what steps does the solution take to reduce it?

- Do the findings have enough detail to allow you to reproduce them?

- Can you upload source code or SAST findings to improve results?

Autonomy and adaptation

The promise of AI pentesting is boosting security without boosting headcount. Determine exactly how autonomous an AI pentesting solution is:

- Can it chain multi-step exploits?

- Does it adapt based on response behavior?

- Is it hypothesis-driven or signature-based?

Safety and governance

Can you control an AI pentesting solution and keep it from affecting production systems? Make sure to determine:

- What guardrails exist? Are there default guardrails? If so, can you customize them?

- Is there a kill switch?

- How do you control the scope of the testing?

- Can you control when testing happens and how aggressive it is? How can you ensure it won’t disrupt your production systems?

- What data is retained (requests/responses, creds, tokens, findings)?

- Is customer data used for training (opt-in/opt-out)?

- Can it run in an isolated environment or private deployment?

- How are secrets handled?

Operational integration

Will the solution blend into your current workflows? Does it work with your hosting requirements? Ask:

- Can you access the solution via API?

- Does it integrate with your CI/CD pipeline, and if so, how?

- Are there ticketing integrations (Jira, ServiceNow)?

- Can developers initiate tests?

- Can it pentest incrementally to focus only on newly added features?

- How is it hosted? What are the options? Can you self-host? Can you isolate your data?

Scalability and economics

Scale is the biggest advantage AI pentesting has over manual testing. But can the solution scale the way you need it to? Consider:

- How many apps can be tested concurrently?

- Is the testing continuous or scheduled?

- How long do tests take?

- Where is human interaction required?

Transparency and reporting

Can you fix what’s found and satisfy auditors? Make sure you understand:

- Is the reporting actionable? Is there clear remediation guidance?

- Is there enough detail to allow teams to reproduce the issue?

- Is the output audit-ready? For which regulations?

Get the full AI pentesting buyer’s guide

For more details on how AI pentesting works, the different types of solutions, and evaluation criteria, download our new buyer’s guide, What to Look for in AI Pentesting.
